Apple to bring air gestures to Mac for UI navigation

Apple has been awarded a patent for air gesture-based UI navigation on the Mac, using technology that looks, feels, and behaves much like Microsoft’s original Kinect.

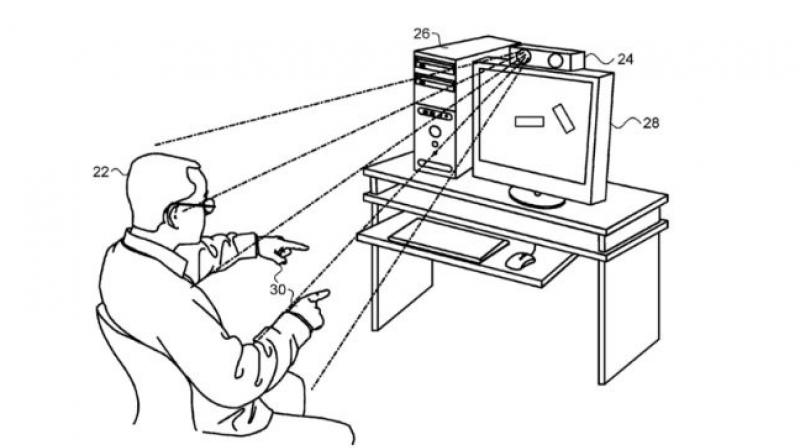

The patent, called “three dimension user interface session control using depth sensor”, describes a way to access certain Mac features using only hand gestures, without touching the screen. The abstract of the patent provides more detail:

“A method, including receiving, by a computer executing a non-tactile three dimensions (3D) user interface, a set of multiple 3D coordinates representing a gesture by a hand positioned within a field of view of a sensing device coupled to the computer, the gesture including a first motion in a first direction along a selected axis in space, followed by a second motion in a second direction, opposite to the first direction, along the selected axis. Upon detecting completion of the gesture, the non-tactile 3D user interface is transitioned from a first state to a second state.”
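The gesture in the abstract, a motion along one axis followed by a motion back along the same axis, can be illustrated with a minimal Python sketch. This is not the patent's implementation: the function names, the `min_travel` threshold, the assumption that the depth axis is index 2, and the "locked"/"unlocked" state labels are all hypothetical, chosen only to show the idea.

```python
from typing import Sequence, Tuple

Point3D = Tuple[float, float, float]  # (x, y, z) in metres; units are an assumption


def detect_back_and_forth(points: Sequence[Point3D],
                          axis: int = 2,
                          min_travel: float = 0.10) -> bool:
    """Return True if the hand moves at least `min_travel` along `axis`
    in one direction and then at least `min_travel` back the other way.

    `axis` and `min_travel` are illustrative parameters, not values
    taken from the patent.
    """
    values = [p[axis] for p in points]
    if len(values) < 3:
        return False
    start = values[0]
    # Check both possible first directions along the selected axis.
    for sign in (1, -1):
        peak = 0.0  # farthest displacement seen so far in the first direction
        for v in values:
            displacement = sign * (v - start)
            if displacement > peak:
                peak = displacement
            elif peak >= min_travel and peak - displacement >= min_travel:
                # Went far enough out, then came far enough back.
                return True
    return False


def next_state(state: str, points: Sequence[Point3D]) -> str:
    """Move from a first state to a second state once the gesture completes.

    The state names are hypothetical placeholders.
    """
    if state == "locked" and detect_back_and_forth(points):
        return "unlocked"
    return state


# A push toward the sensor and back: z shrinks, then grows again.
push = [(0.0, 0.0, 0.60), (0.0, 0.0, 0.55), (0.0, 0.0, 0.45),
        (0.0, 0.0, 0.50), (0.0, 0.0, 0.60)]
# A one-way motion that never reverses.
one_way = [(0.0, 0.0, 0.60), (0.0, 0.0, 0.50), (0.0, 0.0, 0.40)]
```

With these sample inputs, `next_state("locked", push)` moves to the second state, while `next_state("locked", one_way)` leaves the state unchanged because the motion never reverses.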

The patent further details how the gestures would work, adding that users could even unlock a Mac with a predefined gesture, such as “raising a hand at a specified distance, a sequence of two sequential wave gestures, and a sequence of two sequential push gestures.”

One key detail stands out: the patent was filed by PrimeSense, a company now owned by Apple that developed the sensor technology behind the original Xbox Kinect.

Members of the PrimeSense team contributed to the development of Apple’s TrueDepth camera system, and Apple might now be looking into ways to bring that technology to more of its products.

As with everything that’s still in the patent stage, there’s no guarantee such features will ever ship on a publicly available device, but the patent does offer a glimpse into how the company imagines the future of the Mac.

With inputs from Softpedia