MIT researchers create system that generates videos of the future

Given a still image, CSAIL deep-learning system generates videos that predict what will happen next in a scene.

It can be easy to forget how effortlessly we understand our surroundings, especially given the dynamic physical world we live in. We can figure out how scenes change and how objects in our environment interact. However, what is second nature for us is still a huge problem for machines. With the limitless number of ways that objects can move, teaching computers to predict future actions is difficult.

Researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have moved a step closer, developing a deep-learning algorithm that, given a still image from a scene, can create a brief video that simulates the future of that scene.

The team says that future versions could be used for everything from improved security tactics to safer self-driving cars. According to CSAIL PhD student and first author Carl Vondrick, the algorithm can also help machines recognize people’s activities without expensive human annotations.

“These videos show us what computers think can happen in a scene,” says Vondrick. “If you can predict the future, you must have understood something about the present.”

Vondrick wrote the paper with MIT professor Antonio Torralba and Hamed Pirsiavash, a former CSAIL postdoc. The work will be presented at next week’s Neural Information Processing Systems (NIPS) conference in Barcelona.

How it works

Torralba’s model generates completely new videos that haven’t been seen before. This work focuses on processing the entire scene at once, with the algorithm generating as many as 32 frames from scratch per second.

“Building up a scene frame-by-frame is like a big game of ‘Telephone,’ which means that the message falls apart by the time you go around the whole room,” says Vondrick. “By instead trying to predict all frames simultaneously, it is as if you’re talking to everyone in the room at once.”

To create multiple frames, researchers taught the model to generate the foreground separately from the background, and then to place the objects in the scene, which lets the model learn which objects move and which don’t.
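As a rough illustration of the foreground/background idea, the sketch below blends a moving foreground with a single still background using a per-pixel mask. The article does not say how the two streams are combined, so the mask-based blend, the function name `composite_video`, and the array shapes are all assumptions made for this example, and random arrays stand in for the outputs of a trained network.

```python
import numpy as np

def composite_video(foreground, background, mask):
    """Blend a moving foreground with a static background (illustrative only).

    foreground: (T, H, W, C) array, one image per frame
    background: (H, W, C) array, a single still image
    mask:       (T, H, W, 1) array in [0, 1]; 1 selects the foreground
    """
    # Broadcasting repeats the single background image across all T frames.
    return mask * foreground + (1.0 - mask) * background[np.newaxis]

# Hypothetical sizes: 32 frames of a tiny 4x4 RGB scene.
T, H, W, C = 32, 4, 4, 3
rng = np.random.default_rng(0)
foreground = rng.random((T, H, W, C))
background = rng.random((H, W, C))

# Mark a small central region as "moving"; everything else stays background.
mask = np.zeros((T, H, W, 1))
mask[:, 1:3, 1:3, :] = 1.0

video = composite_video(foreground, background, mask)
```

In this sketch, pixels where the mask is 0 stay identical in every frame, which is one simple way to express that some parts of a scene move while others do not.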

The team used a deep-learning method called ‘adversarial learning’ that involves training two competing neural networks. One network generates video, and the other discriminates between the real and generated videos. Over time, the generator learns to fool the discriminator.
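The competition between the two networks can be sketched with the loss each one tries to minimize. This is a minimal example of a standard adversarial objective based on binary cross-entropy, not the team's actual training code; the function names and the example scores are hypothetical, and the networks themselves are left out.

```python
import numpy as np

def bce(probs, target):
    """Binary cross-entropy between predicted probabilities and a 0/1 target."""
    eps = 1e-12
    probs = np.clip(probs, eps, 1.0 - eps)
    return float(-np.mean(target * np.log(probs) + (1 - target) * np.log(1 - probs)))

def discriminator_loss(d_real, d_fake):
    # The discriminator wants real videos scored near 1 and generated ones near 0.
    return bce(d_real, 1.0) + bce(d_fake, 0.0)

def generator_loss(d_fake):
    # The generator wants its videos scored near 1, i.e. to fool the discriminator.
    return bce(d_fake, 1.0)

# Hypothetical discriminator scores (probability that a video is real).
d_real = np.array([0.9, 0.8])
d_fake_early = np.array([0.1, 0.2])  # early in training: fakes are easy to spot
d_fake_late = np.array([0.6, 0.7])   # later: fakes are more convincing

print(generator_loss(d_fake_early), generator_loss(d_fake_late))
print(discriminator_loss(d_real, d_fake_early), discriminator_loss(d_real, d_fake_late))
```

As the generated videos become more convincing, the generator's loss falls while the discriminator's loss rises, which is the tug-of-war the paragraph above describes.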

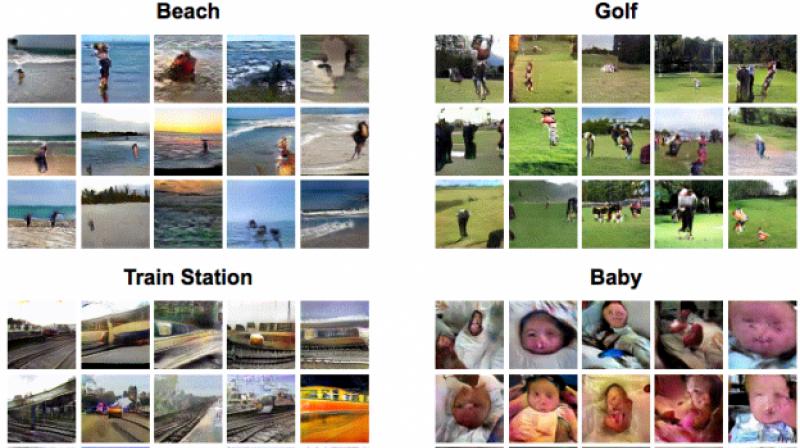

From that, the model can create videos resembling scenes from beaches, train stations, hospitals, and golf courses. For example, the beach model produces beaches with crashing waves and the golf model has people walking on grass.

Vondrick stresses that the model still lacks some fairly simple common-sense principles. For example, it often doesn’t understand that objects are still there when they move, like when a train passes through a scene. The model also tends to make humans and objects look much larger than they are in reality.

Another limitation is that the generated videos are just one and a half seconds long, which the team hopes to be able to increase in future work. The challenge is that this requires tracking longer dependencies to ensure that the scene still makes sense over longer time periods. One way to do this would be to add human supervision.

“It’s difficult to aggregate accurate information across long time periods in videos,” says Vondrick. “If the video has both cooking and eating activities, you have to be able to link those two together to make sense of the scene.”

These types of models aren’t limited to predicting the future. Generative videos can be used for adding animation to still images, like the animated newspaper from the Harry Potter books. They could also help detect anomalies in security footage and compress data for storing and sending longer videos.

“In the future, this will let us scale up vision systems to recognize objects and scenes without any supervision, simply by training them on video,” says Vondrick.

This work was supported by the National Science Foundation, the START program at UMBC, and a Google PhD fellowship.