A new age of smart devices is here

Human beings experience a range of emotions every day and respond with gestures. In a digital society, it is certain that humans will communicate with humanoid robots or conversational agents (avatars) in the future.

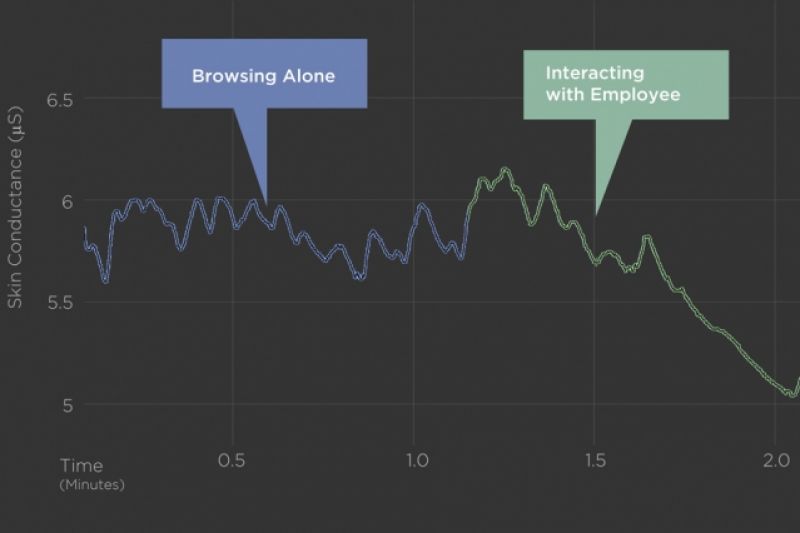

MIT Media Lab spinout mPath, for one, is able to pinpoint the exact moment consumers feel these subconscious emotional responses. The start-up has brought some interesting insights to light, which will help businesses refine their services and products.

mPath has developed a new approach called ‘emototyping,’ a process that combines stress sensors with eye-tracking glasses or GoPro cameras to identify where, or at what, a person was looking at the exact moment of an emotional response.

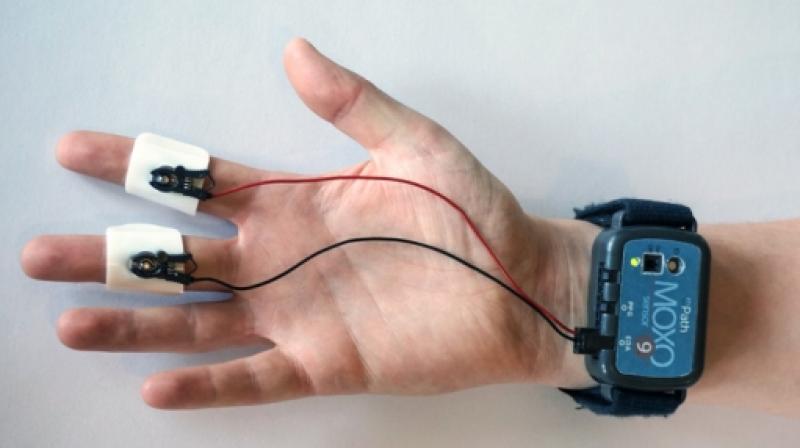

The MOXO sensor used here is a smart wearable that looks like a bulky smartwatch. When placed on the wrist, the sensor measures changes in skin conductance. These readings reflect sympathetic nervous system activity and physiological arousal. The whole process provides a more in-depth and precise profile of the user than conventional market research, which involves only interviews and, occasionally, video analysis.

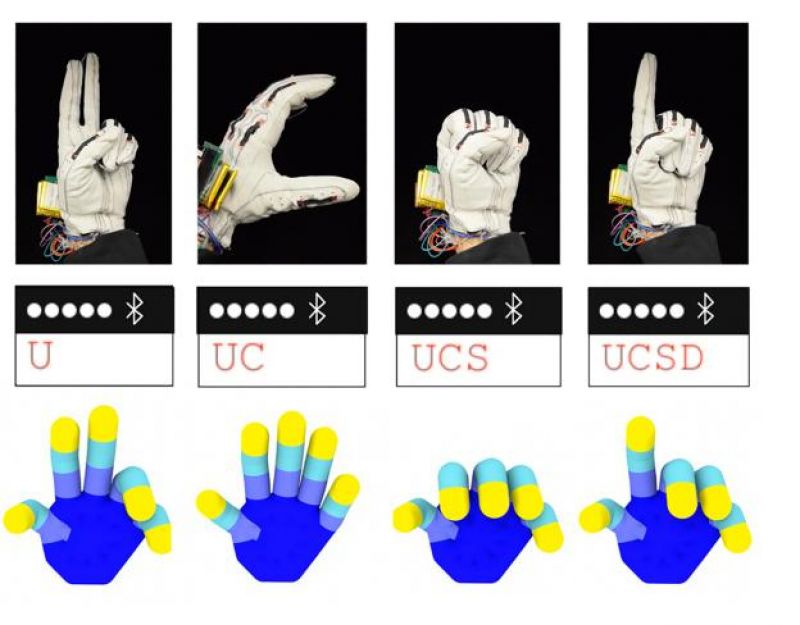

Another example of how smart devices are becoming a vital part of everyday life is a smart glove developed by researchers at UC San Diego, which can translate the American Sign Language (ASL) alphabet into digital text.

What’s more surprising is that the glove was created using less than $100 worth of components. It packs in two flexible strain sensors on each finger and one on the thumb. Each sensor's electrical resistance changes according to the force applied to it, and the glove measures that change.

Each letter in the ASL alphabet produces a different combination of electrical resistances across the sensors whenever a user signs while wearing the glove. Based on these combinations, the smart device mounted on the glove determines which letter the wearer is signing. The information is then sent to a smartphone via Bluetooth, where the signed letter is automatically converted into readable text.
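To make the idea concrete, here is a minimal Python sketch of how a combination of resistance readings could be turned into a letter. It is an illustration only, not the researchers' actual method: it assumes each of the nine sensors (two on each of four fingers, one on the thumb) is reduced to a simple "bent" or "straight" bit by comparing its resistance to a threshold. The threshold value, the function names, and the two entries in the lookup table are hypothetical placeholders and do not represent real ASL encodings.

```python
from typing import Dict, Optional, Sequence, Tuple

# Two sensors on each of four fingers plus one on the thumb, as described above.
NUM_SENSORS = 9

# Hypothetical cutoff in ohms: readings above it count as "bent" (1).
THRESHOLD_OHMS = 50_000.0

# Placeholder table mapping a 9-bit combination to a letter.
# These codes are invented for illustration and are NOT real ASL encodings.
LETTER_CODES: Dict[Tuple[int, ...], str] = {
    (0, 1, 1, 1, 1, 1, 1, 1, 1): "A",
    (1, 0, 0, 0, 0, 0, 0, 0, 0): "B",
}


def to_code(resistances: Sequence[float],
            threshold: float = THRESHOLD_OHMS) -> Tuple[int, ...]:
    """Reduce raw resistance readings to a tuple of 0/1 bits."""
    if len(resistances) != NUM_SENSORS:
        raise ValueError(f"expected {NUM_SENSORS} readings, got {len(resistances)}")
    return tuple(1 if r > threshold else 0 for r in resistances)


def decode_letter(resistances: Sequence[float],
                  table: Dict[Tuple[int, ...], str] = LETTER_CODES,
                  threshold: float = THRESHOLD_OHMS) -> Optional[str]:
    """Look up the letter for a set of readings; None if the combination is unknown."""
    return table.get(to_code(resistances, threshold))


if __name__ == "__main__":
    # One high reading on the first sensor, low readings on the rest.
    sample = [60_000.0] + [10_000.0] * 8
    print(decode_letter(sample))
```

With the placeholder table above, the sample readings map to the entry labeled "B", and any combination missing from the table yields `None`. A real glove would need a complete table for all letters and would likely have to cope with noisy readings and differences between wearers.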

The researchers believe that this is the most versatile device that translates sign language into text; however, it is not the first to attempt this. An all-deaf team of entrepreneurs from the Rochester Institute of Technology developed the MotionSavvy Uni, a tablet case embedded with Leap Motion's gesture-sensing technology. The device enabled true conversation between a deaf person and a hearing person by transcribing the spoken word into text so that it could be easily read.

The researchers also believe that the glove's use as an ASL translator is only the beginning. They see it as an interface for virtual environments, one that could come in handy for VR gaming and controlling drones in the future.